Hi,

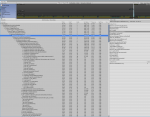

I'm trying to track down the cause of a lag spike that occurs when I enable the behavior tree on some GameObjects. It appears to be caused by JSON serialization inside the `EnableBehavior` call, but I'm not sure why.

I instantiate the GameObject with `Start When Enabled` unchecked and then disable it. Later, I enable the GameObject with a trigger and call `BehaviorTree.EnableBehavior()`, and that is when I see the spike. If I instantiate the GameObject and enable the behavior when the scene loads, I don't see the same GC allocations.

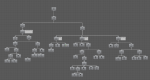

I've attached screenshots to give an idea of the size of my tree and variables, along with the Profiler info. I can send a Profiler data file if that helps; I just can't seem to attach it to the post.

I also tried detaching the first node in the BT and replacing it with a single Idle task, and I still had the same issue.

I'm using Unity 2018.3.6f1 on a Win10 machine with the latest Behavior Designer version.

Any help is appreciated.
